WHAT IS LOG AGGREGATION?

Regardless of industry, enterprises generate huge amounts of structured and unstructured log data from network and security devices, traffic monitoring systems, workstations, servers, and more. Log aggregation is the process of consolidating these log files in a centralized platform, parsing them into individual fields, and structuring them so their format is consistent.
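To make the "parse and structure" step concrete, here is a minimal Python sketch that maps two different raw log formats onto one common record. Both input formats, the `normalize` function, and the field names of the common schema are assumptions made for illustration; real aggregation tools ship their own parsers.

```python
import re
from datetime import datetime, timezone

# Two hypothetical input formats, chosen only for illustration.
ACCESS_RE = re.compile(
    r'(?P<host>\S+) \S+ \S+ \[(?P<ts>[^\]]+)\] "(?P<msg>[^"]*)" (?P<status>\d{3}) \S+'
)
APP_RE = re.compile(
    r'(?P<ts>\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2})Z (?P<level>[A-Z]+) (?P<host>\S+) (?P<msg>.*)'
)


def normalize(line: str, source: str) -> dict:
    """Parse one raw log line into a common schema."""
    m = ACCESS_RE.match(line)
    if m:
        ts = datetime.strptime(m["ts"], "%d/%b/%Y:%H:%M:%S %z")
        # Treat 5xx responses as errors; everything else is informational.
        level = "ERROR" if m["status"].startswith("5") else "INFO"
        return {"timestamp": ts.astimezone(timezone.utc).isoformat(),
                "host": m["host"], "level": level,
                "message": m["msg"], "source": source}
    m = APP_RE.match(line)
    if m:
        ts = datetime.strptime(m["ts"], "%Y-%m-%dT%H:%M:%S").replace(tzinfo=timezone.utc)
        return {"timestamp": ts.isoformat(), "host": m["host"],
                "level": m["level"], "message": m["msg"], "source": source}
    # Keep lines we cannot parse instead of silently dropping them.
    return {"timestamp": None, "host": None, "level": "UNKNOWN",
            "message": line, "source": source}
```

Once every line has the same fields, searching and filtering across sources becomes a matter of querying those fields rather than writing one pattern per log format.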

Why Do We Need Log Aggregation?

Logs are crucial for enterprises and IT teams to understand the performance and health of an organization’s IT infrastructure. Without aggregation, troubleshooting an error or an emergency means logging in to each system and server, either directly or over SSH, and inspecting its log files individually. Log aggregation tools make this easier by putting all the logs in one place.

Organizations use log management and analytics tools to ensure event logs are aggregated, monitored, and accurately analyzed. Log aggregation tools help different teams gather logs from multiple sources and analyze the data. The information from these logs helps in cybersecurity, digital forensic investigations, and meeting compliance mandates.

As data volume, variety, and complexity continue to grow, however, traditional tools fail to meet the log aggregation needs of modern enterprises. Inefficient log management makes detecting data breaches or compliance lapses difficult. To cope with these challenges, enterprises and startups that are shifting their infrastructure, applications, systems, and data to the cloud have started adopting modern log management tools like Loggly®, LogDNA, and Fluentd. These tools help with maintaining, managing, and troubleshooting IT systems, they can be cost-effective, and they can help you save time.

Best Practices for Log Aggregation

The following practices can help you aggregate and centralize your logs.

1. Replicate Log Files

Replication means making a separate copy of log files and storing it at a centralized location for easy access. On a Linux server, this is typically done with rsync, scheduled by the cron daemon. Because cron runs tasks at fixed intervals, the central copy always lags behind the source, so you don’t get real-time insight into your log files.
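The idea behind scheduled replication can be sketched in a few lines of Python. This is a stand-in for rsync, not a replacement for it: the function name `replicate_logs`, the `*.log` pattern, and the directory arguments are assumptions for illustration. It copies only files whose size or modification time has changed, similar in spirit to rsync’s default quick check.

```python
import shutil
from pathlib import Path


def replicate_logs(source_dir: str, central_dir: str, pattern: str = "*.log") -> list:
    """Copy new or changed log files into central_dir; return the names copied."""
    src, dst = Path(source_dir), Path(central_dir)
    dst.mkdir(parents=True, exist_ok=True)
    copied = []
    for log_file in sorted(src.glob(pattern)):
        target = dst / log_file.name
        src_stat = log_file.stat()
        # Skip files whose size and modification time are unchanged.
        if target.exists():
            dst_stat = target.stat()
            if (dst_stat.st_size == src_stat.st_size
                    and int(dst_stat.st_mtime) == int(src_stat.st_mtime)):
                continue
        shutil.copy2(log_file, target)  # copy2 preserves the modification time
        copied.append(log_file.name)
    return copied
```

Run from a scheduler such as cron, a script like this refreshes the central copy once per interval, which is exactly why this approach cannot give real-time visibility.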

2. Syslog, rsyslog, or syslog-ng

Syslog is a standardized protocol for sending log entries as messages to a central logging server, and rsyslog and syslog-ng are widely used daemons that implement it. To use this approach, you set up a central syslog daemon (a program running as a background process) on your network and configure your other systems to forward their messages to it. The main operational concern is making sure the central syslog server is always available and can scale with your log volume.
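From the application side, forwarding to a central syslog server can look like the following sketch, which uses `SysLogHandler` from Python’s standard library. The helper name `build_syslog_logger`, the message format, and the choice of UDP are assumptions for illustration; the host and port you pass in would be those of your own syslog server.

```python
import logging
import logging.handlers
import socket


def build_syslog_logger(name: str, host: str, port: int = 514) -> logging.Logger:
    """Return a logger that forwards records to a central syslog server over UDP."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    handler = logging.handlers.SysLogHandler(
        address=(host, port),
        facility=logging.handlers.SysLogHandler.LOG_USER,
        socktype=socket.SOCK_DGRAM,  # UDP: fire-and-forget, no delivery guarantee
    )
    # Prefix each message with the logger name so the server can tell apps apart.
    handler.setFormatter(logging.Formatter("%(name)s: %(levelname)s %(message)s"))
    logger.addHandler(handler)
    return logger
```

UDP keeps the sender simple but drops messages silently if the server is down, which is one reason the availability of the central server matters so much in this setup.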

3. Choose Open-Source or Commercial Aggregation Tools

Using dedicated tools is another way to manage and aggregate your logs. You can choose either an open-source or a commercial tool, depending on your requirements. Although most tools rely on common standards for log collection and centralization, they differ in features, storage capacity, cost, and monitoring capabilities.

Top 5 Log Aggregation Tools

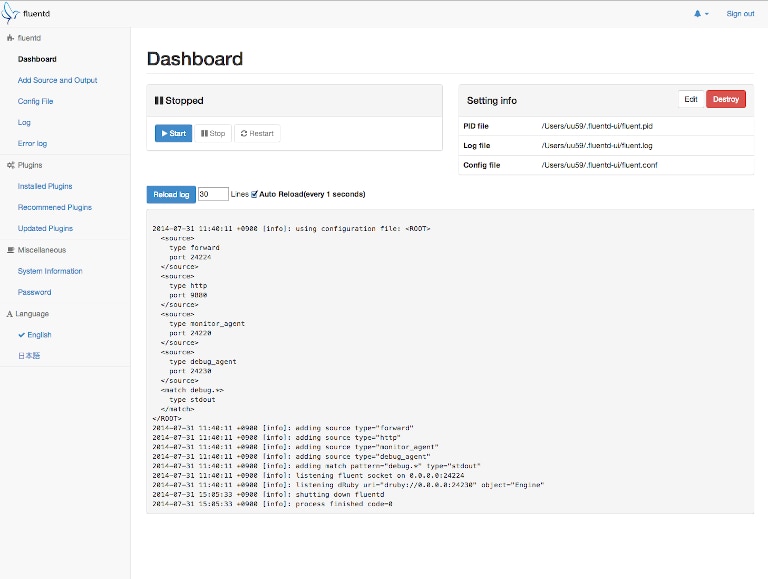

1. Fluentd

Fluentd is an open-source data collector designed to decouple data sources from back-end systems by providing a Unified Logging Layer (ULL) between them. Here are some of the software’s major highlights:

- Unified Log Structuring With JSON: Fluentd structures your log data in JSON, allowing you to collect, filter, buffer, and centralize logs from multiple sources (mobile devices, web servers, etc.).

- Flexible Plug-In Architecture: Using Fluentd plug-ins, IT teams can extend its functionality and easily connect it to many data sources and outputs.

- Minimal Resource Requirements: Fluentd is a lightweight log collection tool. It’s written in a combination of C and Ruby and requires minimal system resources.

- Data Loss Prevention: Fluentd supports both memory- and file-based buffering to prevent data loss, as well as robust failover, and it can store data in multiple systems.
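The JSON structuring and file-based buffering described above can be illustrated with a small Python sketch. This is not Fluentd’s implementation: the `FileBuffer` class, its methods, and the `send` callback are hypothetical names used only to show the idea. Each event is stored as a JSON object with a tag, a time, and a record, and events are removed from disk only after they have been delivered.

```python
import json
from pathlib import Path
from typing import Callable


class FileBuffer:
    """Append events to a local file and remove them only after a successful send."""

    def __init__(self, path: str):
        self.path = Path(path)

    def append(self, tag: str, time: float, record: dict) -> None:
        # One JSON object per line keeps the buffer easy to re-read after a crash.
        event = {"tag": tag, "time": time, "record": record}
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(event) + "\n")

    def flush(self, send: Callable[[list], None]) -> int:
        """Try to deliver all buffered events; keep them on disk if send() fails."""
        if not self.path.exists():
            return 0
        lines = self.path.read_text(encoding="utf-8").splitlines()
        events = [json.loads(line) for line in lines if line]
        if not events:
            return 0
        try:
            send(events)
        except OSError:
            return 0  # destination unavailable: events stay buffered for a retry
        self.path.unlink()
        return len(events)
```

Because the buffer lives on disk, events written before a network outage or a process restart are still there for the next flush attempt.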

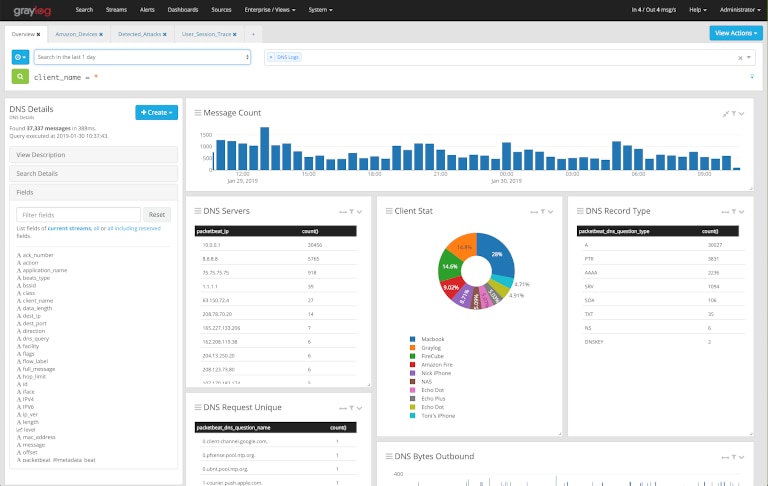

2. Graylog

Graylog is a popular log data management tool. Along with its log data collection, storage, and analysis capabilities, Graylog is cost-effective, scalable, and fast. It’s available as an open-source edition and as a commercial Enterprise edition, and the two differ in their features:

- Graylog Open Source includes features such as dashboards, advanced searches, content packs, and fault tolerance.

- Graylog Enterprise includes all the features of Open Source as well as a correlation engine, event management, views, and reporting.

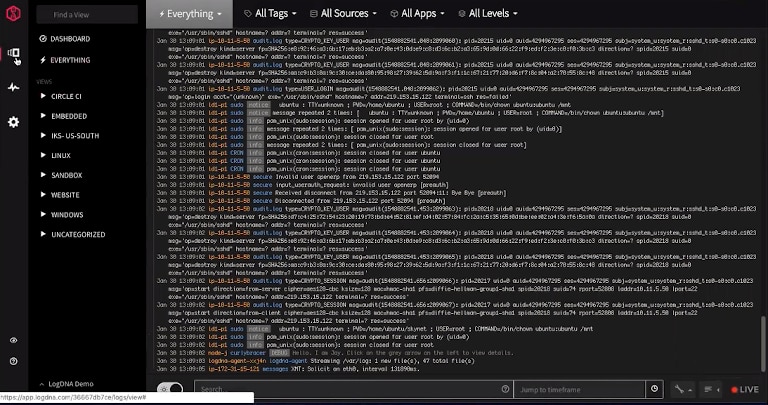

3. LogDNA

LogDNA is an advanced log management and analytics tool capable of quickly managing and aggregating logs from different applications, servers, and devices from any location. Some of the key features the tool offers include custom log parsing, real-time alerts and notifications, Google-like searches, and live tail. LogDNA is one of the top log management tools designed to help you meet a range of security and compliance mandates.

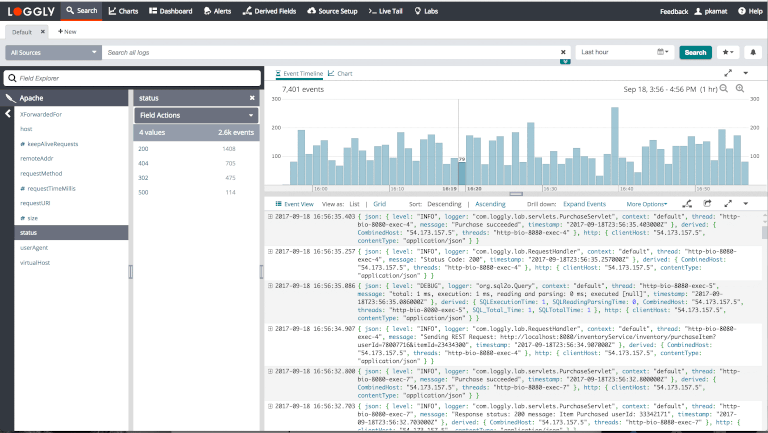

4. Loggly

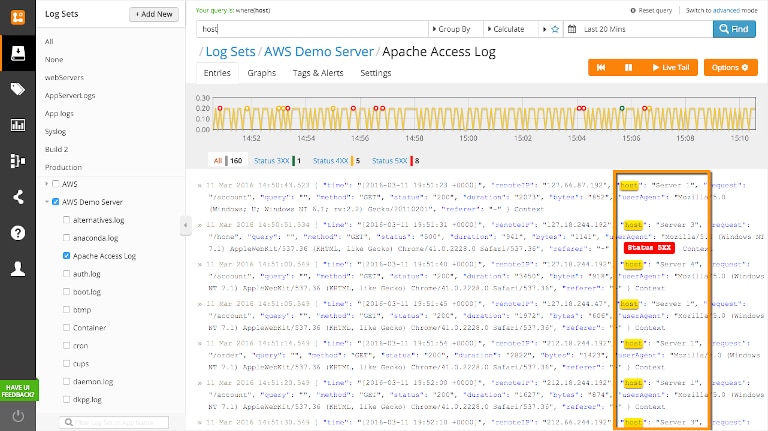

SolarWinds® Loggly is a comprehensive cloud-based log management tool designed to search huge volumes of log data. Loggly supports log aggregation from a wide range of systems, applications, and frameworks. You can upload your logs to the cloud-based service using syslog or HTTP/S. It also aggregates logs from log sources such as NXlog, rsyslog, AWS scripts, Fluentd, Docker, and more. Loggly’s website offers detailed documentation to help you configure your logging setup. In many cases, you can simply copy and paste the script to start collecting logs. Furthermore, as your logs go past their retention periods, Loggly can automatically archive them to Amazon S3 buckets. You can retain these logs for as long as necessary to meet your internal audit or government compliance requirements.

Loggly also offers several features for easy analysis and visualization of logs. You can live tail your logs for real-time visibility, and its intuitive Dynamic Field Explorer™ allows you to click and inspect different fields from parsed logs without typing multiple queries. The tool returns search results quickly, even when you’re analyzing massive log volumes. You can also use out-of-the-box templates to create visual dashboards and monitor critical metrics and events. Moreover, Loggly offers easy integration with tools like GitHub, Jira, Slack, PagerDuty, and more to enable proactive troubleshooting. You can learn more about Loggly’s features or get a free trial on the Loggly website.

5. Logentries

Logentries is a live log management and analysis tool designed to automatically collect log data in any format and centralize it in a secure location where you can effortlessly search, aggregate, and visualize your log data. It’s best for infrastructure monitoring, application performance monitoring, security, and compliance purposes. Logentries offers the following features:

- Pattern-based alerts

- Inactivity alerts

- Weekly reporting

- Chat tool integration

- Anomaly detection
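To show what the first two alert types in this list mean in practice, here is a generic Python sketch. It does not reflect how Logentries implements them; the function names and parameters are assumptions for illustration. A pattern-based alert fires when a log line matches a given expression, and an inactivity alert fires when a source has been silent for longer than expected.

```python
import re
from typing import Iterable, Optional


def pattern_alerts(lines: Iterable[str], pattern: str) -> list:
    """Return the lines that match a regular expression, such as an error signature."""
    regex = re.compile(pattern)
    return [line for line in lines if regex.search(line)]


def inactivity_alert(last_event_ts: Optional[float], now: float,
                     max_silence_s: float) -> bool:
    """Return True when no event has arrived within the allowed silence window."""
    if last_event_ts is None:
        return True  # the source has never reported
    return (now - last_event_ts) > max_silence_s
```

Inactivity alerts are useful precisely because a crashed service often produces no error lines at all, so a pattern-based alert alone would never fire.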

How to Choose the Best Log Aggregation Tool

With so many options available, choosing a single tool for your log aggregation needs is challenging. To make an informed decision, start from your requirements and compare them against the features the above tools offer. If you’re opting for an open-source log aggregation tool, make sure it covers basic features like automated parsing, search, filtering, live tail, and alerts.

Although open-source tools might cover your basic log aggregation needs, they often lack sufficient documentation. Additionally, the community support might not offer a quick resolution for configuration or upgrade-related challenges. To get the best log management capabilities and overcome operational challenges, we recommend choosing a commercial cloud-based tool. In our evaluation, Loggly scored highly on ease of implementation, learnability, usability, and performance. Because it’s a cloud-based tool, it offers easy scalability to meet spikes in log volumes, and its flexible pricing meets the varied needs of organizations of all sizes. Furthermore, getting started with Loggly is simple, as it offers a free trial and dedicated support to solve your initial setup and account management challenges.